How your Claude Code experience will probably go

I started using Claude Code about 6 months ago as an experiment because I wanted to know what the hype is about. I must say the hype is well warranted. My main argument at the time was that the same model is available in tools like Cursor as well completely fell flat. You truly understand the importance of the harness once you go from a tool like Cursor to Claude Code.

I have seen a fair bit being written about how someone uses it, although I realise after talking to a lot of users that every user goes through a journey of building trust with the product, and I don't see enough being talked about. So I decided to write this.

The initiation

Unlike other products, there's no free plan available in Claude Code. I personally wasn't ready to pay the $20, although I had $10 lying around in Anthropic API credits from an experiment I was conducting with their API. So I went ahead with it.

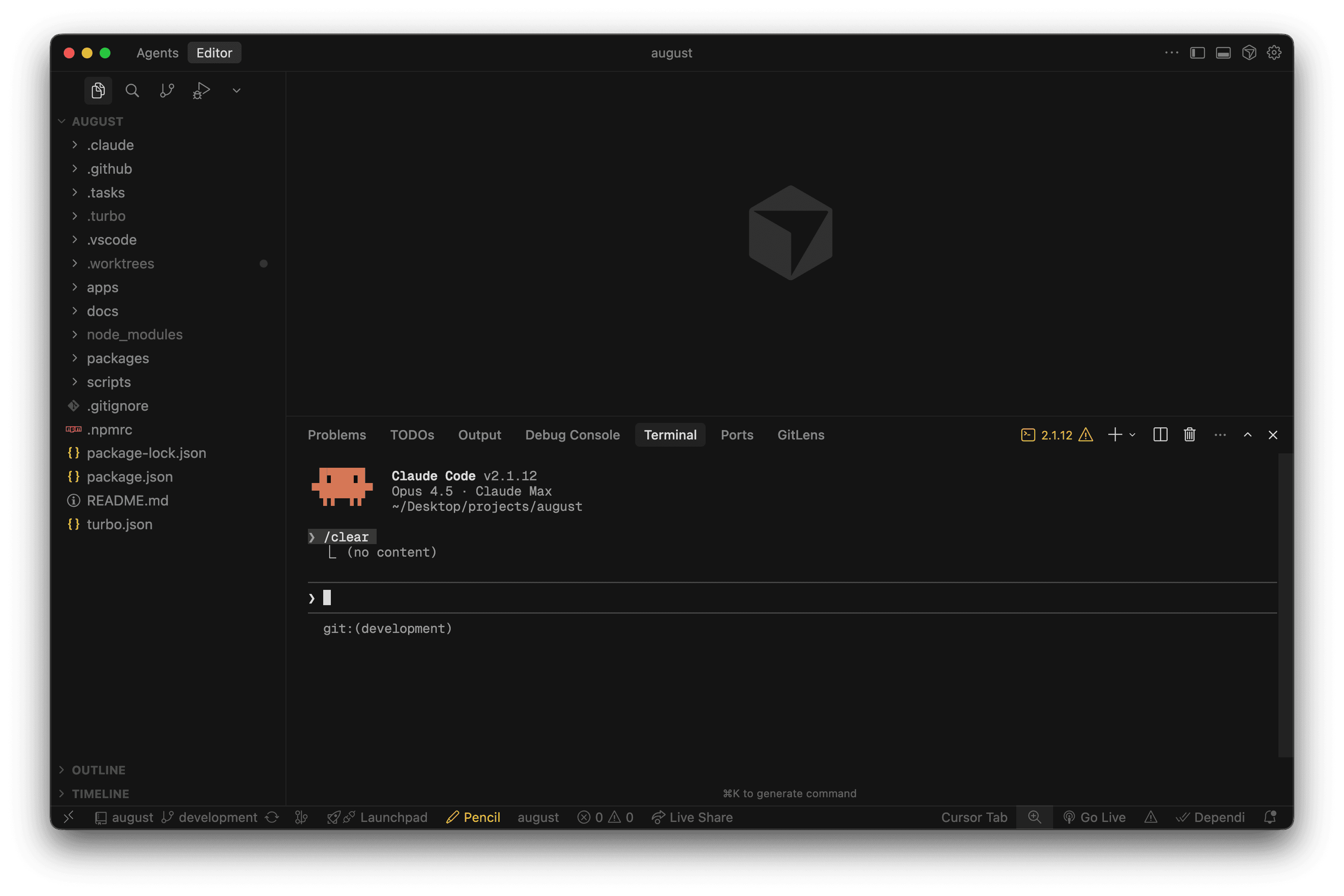

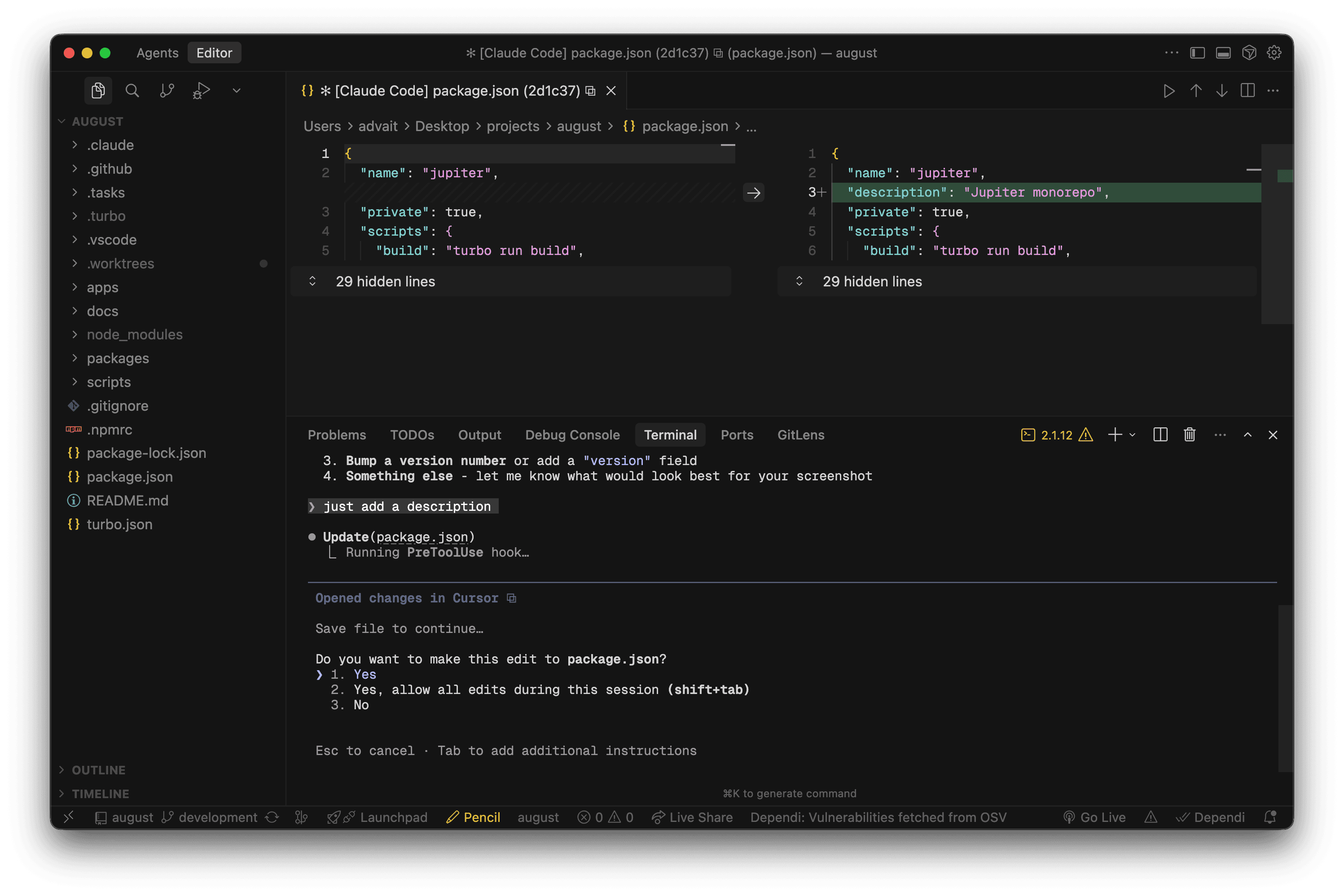

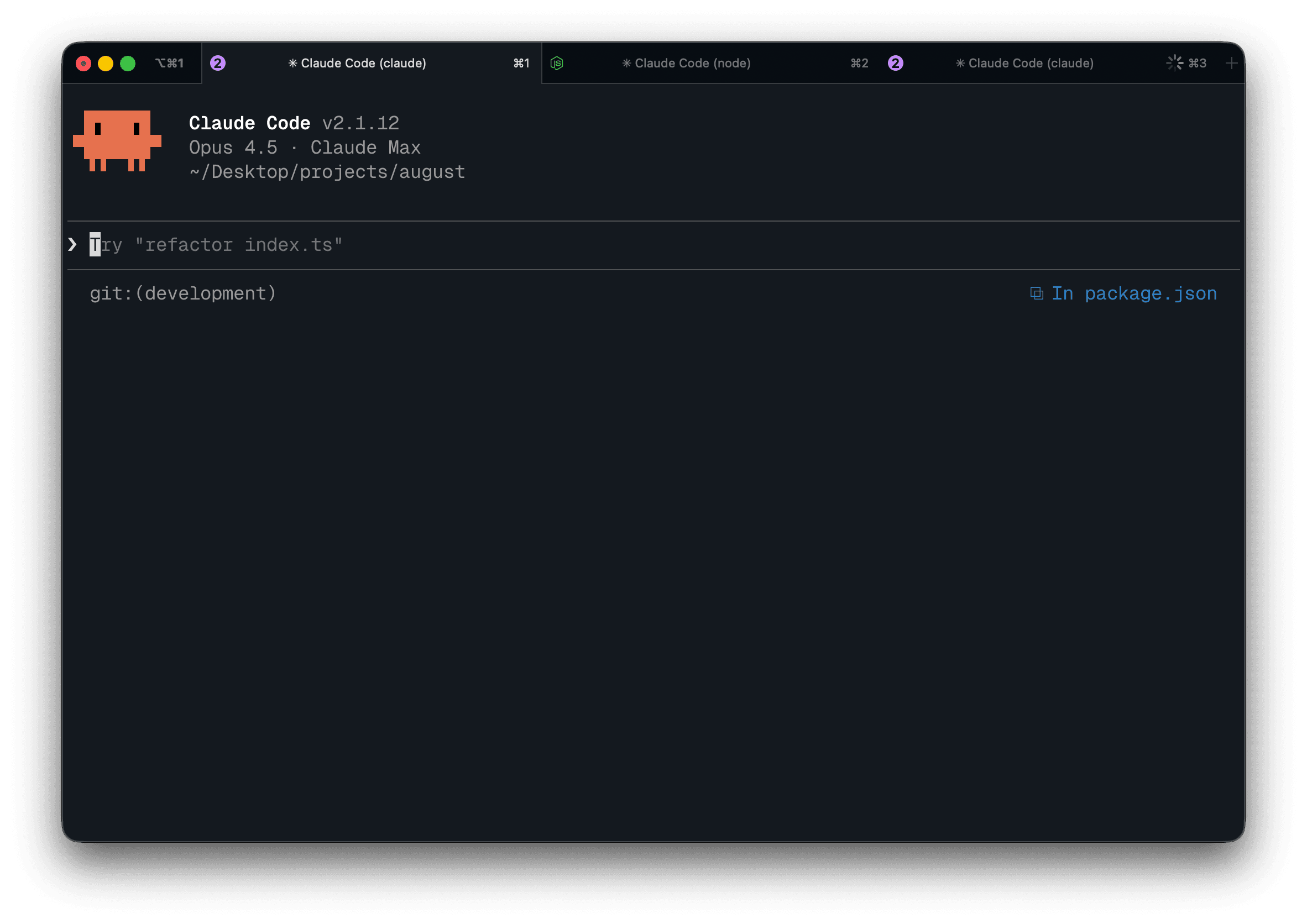

The first couple of things were fairly simple tasks with targeted edits. I had Claude open in Cursor itself as a terminal window.

This is also great because Claude Code has an IDE integration that shows you a file diff so you can accept it or reject it. This is great for building trust with the agent.

The key note you will learn here is that the agent is much more capable than you thought it would be. It can make mistakes sometimes, although it'll self-recover or you can just press escape and give it a new direction. Don't be afraid to do so; it's completely alright to do this multiple times until the agent gets the idea.

The repetition

You will find that there are some commands that you always ask it to run. In my case these were:

- Committing code

- Generating a PR

- Creating a plan from issue #

- Implementing a planned issue

I was actually fairly lazy going through the process of creating these commands, although I urge you to be proactive with these as they reduce your frustration with writing the same thing again and again.

At this point you'll also be frustrated by Claude repeating mistakes. For me, these are using pnpm instead of npm for things, trying to run the project when it can't check the outputs & trying to write migration files on its own.

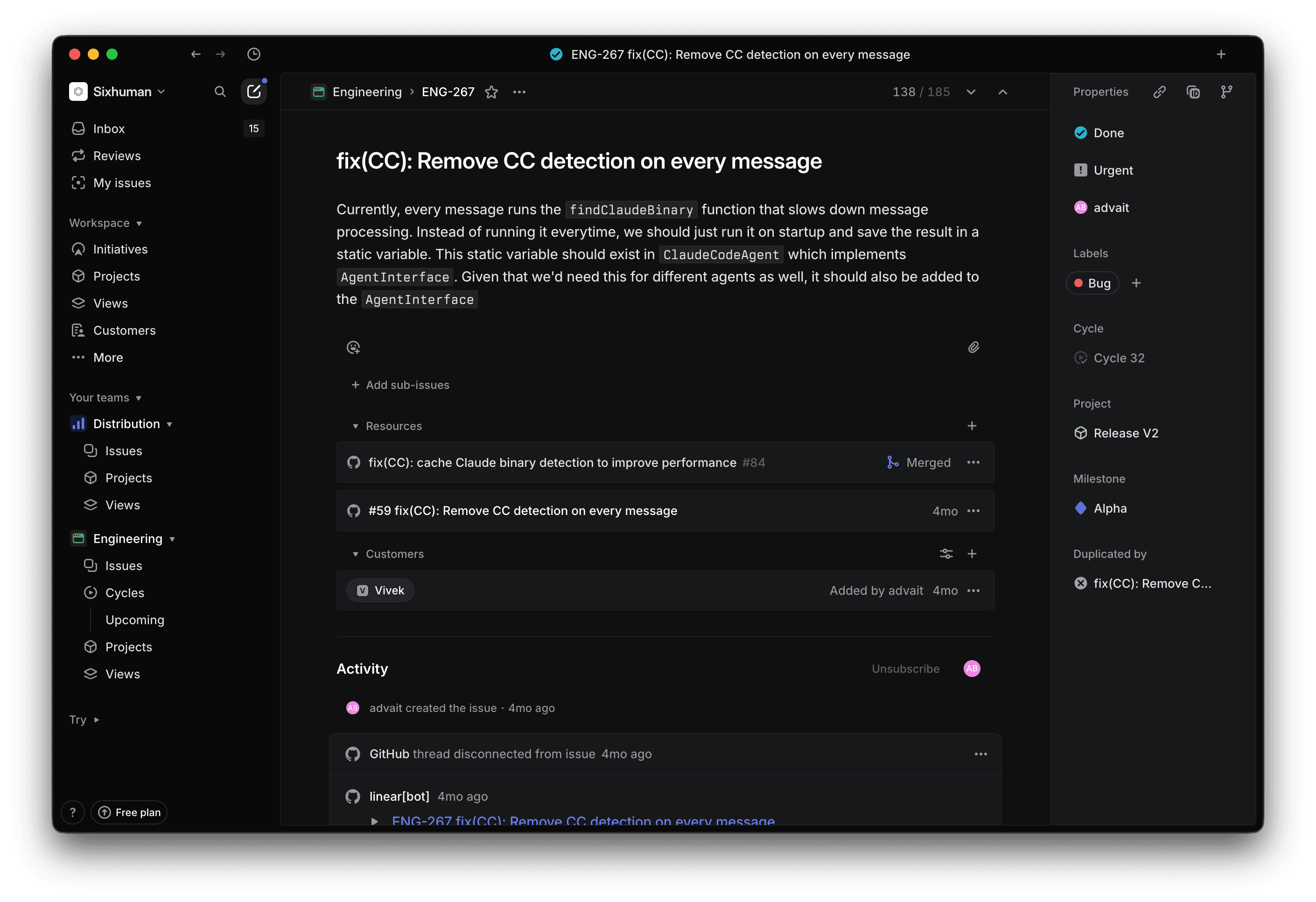

All these could be easily solved by maintaining a claude.md file in your repository. In a true "do as I say, not as I do" fashion, I haven't made this. I have a Linear issue where I want to solve all the repository setup problems, although as time goes on, I'm starting to think this isn't going to happen. So my recommendation here is make a quick and dirty version and then iterate on it.

The flow

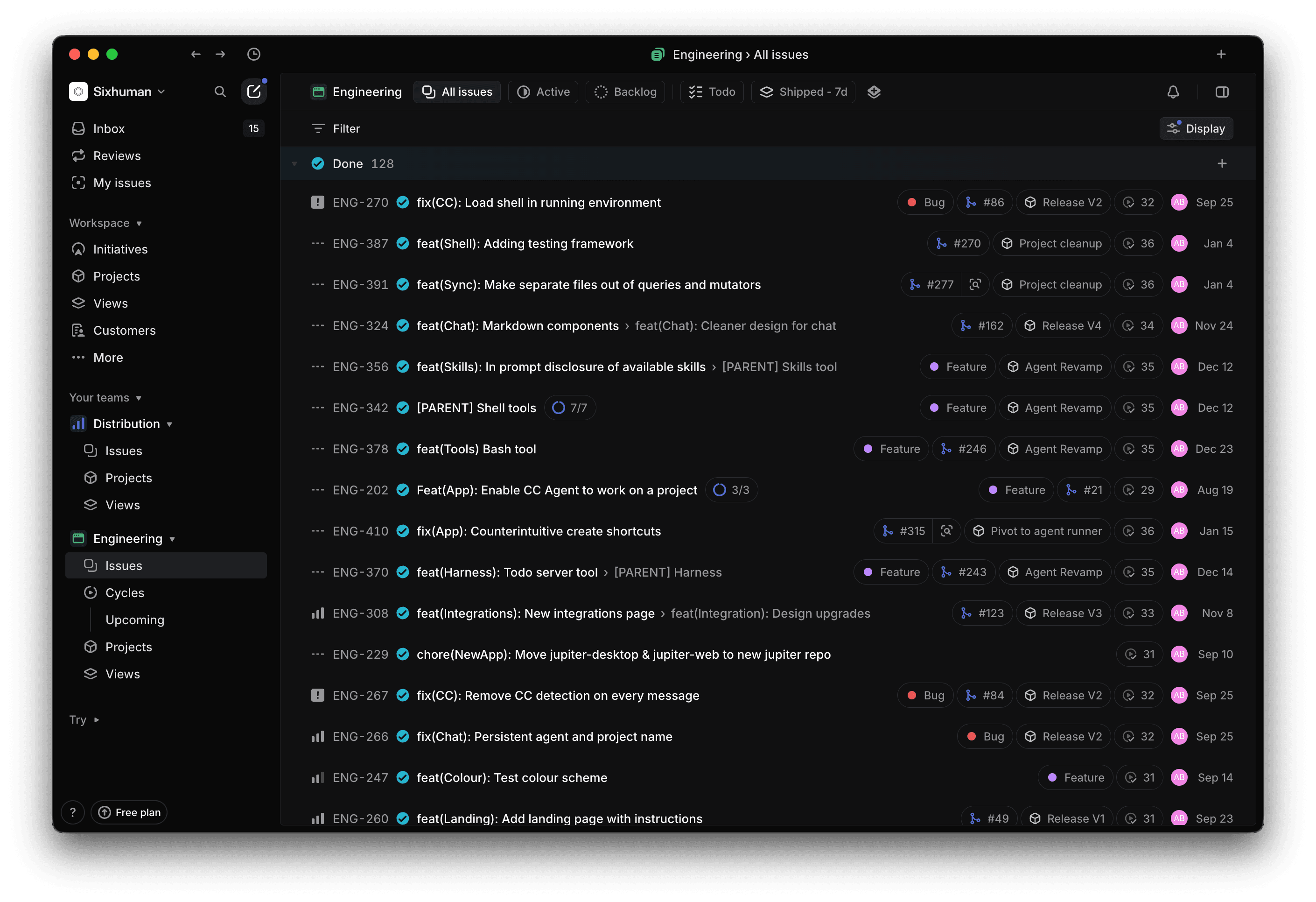

Even though I'm a single person working, I maintain a Linear workspace where I write all my issues and then I add additional context in the issue description.

This ended up helping a lot because I could just ask Claude to use the issue as a prompt. Linear has an MCP that you can add in Claude Code. It's fairly intuitive as well.

I have my GitHub app connected as well so Linear syncs issues to my repositories. This is great because Claude sometimes likes going to issues from GitHub.

The slope of planning

This is probably where you'll also discover the beauty of plan mode. When I initially started using Claude Code, the plan mode was pretty basic. I didn't like it much because I wanted to manually edit plans as well. So I asked Claude Code to add its plans in the issue comment. I would go through it, edit it or completely regenerate it.

The best way to think about plan mode is to imagine Claude implementing a feature as it going through a trajectory. With plan mode, you get to go through the trajectory much faster and without actual edits to your code. It's an easy way of eliminating obvious ways Claude can go wrong.

The new plan mode also has special prompts that make it much more useful. They also store the plan in a markdown file, so any edits you ask it to do won't result in a complete regeneration of the plan.

This is also where I personally started breaking large features into small Claude-able tasks, largely to get around context size limitations, usually based on modules. If a change spanned across my sync engine, backend, and frontend, I would ask a Claude instance to do one and then write a prompt for the next task.

Claude 1: Do work on sync engine + write prompt for backend

Claude 2: Do work on backend + write prompt for frontend

Claude 3: Do work on frontend.

This prompt would include information about the task we're doing, what steps have been completed, and what has to be taken next. As all context of my tasks is stored in the Linear issue description, this prompt can be short.

Context management with ease

Most of the time, these Claude-able tasks won't take too much context, so they're easy to manage. What's tough to manage is debugging with Claude. There are times when something just won't work, or will break with a different error message every time. It's still progress, although progress that's taking a while. This becomes a problem for the 200K context window that Claude has.

Unlike everyone, I haven't faced issues of context rot when the context window is filled because the only time I allow it to happen is when debugging, where the changes are pretty incremental.

I created a command called /evolve, which is information that I want the model to keep for its next instance based on my way of working. This works much better than the compaction that Claude automatically does.

NOTE: This is a temporary solution. I'm sure Anthropic is working on compaction as an active problem; once that comes out, I probably won't use this command.

Embracing the agent

The file editor kind of became irrelevant at this point. While still needed for editing things like colours and website copy, I stopped using it for much. Most code is generated by AI, the outputs are tested by me in dev mode, and once it's in a working state, code review is where I view the final version of code, dropping in comments where I feel changes are warranted. These are again fed into the agent, and then I merge the code.

Getting a code review tool will be very helpful here. I have tried CodeRabbit, although I didn't like how it worked. Claude also has a GitHub app that you can install. Although, by far the best experience I have had is with Greptile.

It's sure of its issues; it reviews code like a human would, by only pointing out plausible issues. It also doesn't become a try-hard while trying to find issues in everything. I have had times where Greptile has just okayed the PR without saying anything. This is obviously personal preference.

This is also where you could use something like the Claude Code mobile app and their web UI to fire off tasks in their code sandboxes. I have been able to complete a few things with these, although I think sandboxes have a long way to come and my codebase has to be a bit mature in terms of tests and initial setup (env variables, binaries that need to be bundled, etc.).

Multi-agent mode

I'll be the first to admit that the crowd spawning tens of agents, sometimes on a single task, seems performative, and on some level it is as well. Although I think there's some truth to being able to run multiple agents at once. For me, it just clicked one day, so let me tell you about it.

I had just completed a large project and I wanted to get some chill tasks out of the way. On top of my list was making tests for applications in my monorepo. I poured a glass of cocktail that was left over from my New Year's party and started with the first application.

To my surprise, Claude's plan was pretty simple in terms of how we can add tests and what tests we'd add. It seemed to cover a wide area as well. I gave it a go ahead and sat for a minute.

Only to realise that I could simultaneously write tests for all applications at once. I learned how worktrees could be created, made 4 of them, and 4 agents were writing tests for all my monorepo applications.

It felt pretty amazing going through this experience because for the first time Claude wasn't just making me fast—it was multiplying what I was trying to do.

In my head though it was a one-time win. There will probably be only rare cases where I would spawn agents at this scale. Oddly, I was wrong.

Today's setup

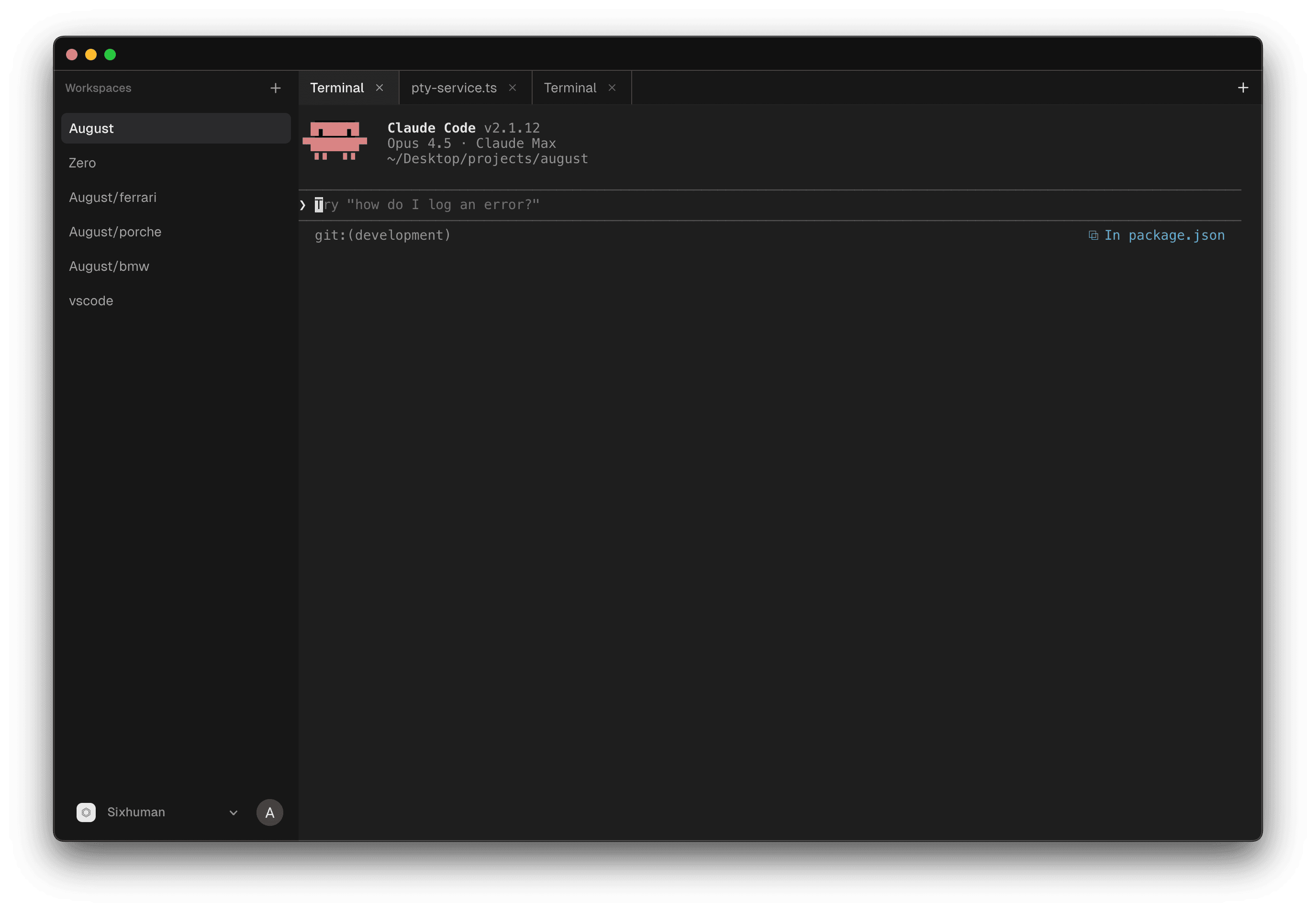

I have started taking user inputs in my Linear issues, and after a couple of user onboardings, I get at least 3–4 issues I could solve instantly and a couple of features I need to explore. I start my workday by spawning agents in my worktrees (which are named after car companies I like) and assigning them an issue each.

Initially this was done in multiple iTerm windows, although now I have created an application to manage this. It allows me to keep worktrees as separate workspaces and easily move between them with shortcuts. You can try it out at august.tech as well.

I move between them when they stop for a permission or question. Although the idea is that I get every agent to a point where the feature or issue assigned to them gets to a working stage and have it make a PR. I don't review these until 3 or 4 of them are created.

Then I switch to reviewer mode, where I'll go through these one by one, marking problems in code quality or an alternate approach altogether, which is then sent back to these agents using this prompt → Address comments on #[PR_NUMBER]

This also helps because by this time Greptile is done commenting on the issue, and the agent can then address comments from both. I also don't clear the context of the agent for this, as it has gotten me better results.

Next steps

The next thing I probably want to do is get to that damn claude.md file, add tests in my repository so everything is verifiable, and make it work such that it's easy for sandboxed agents to also use it. I would love to know how your Claude Code experience has been—was it the same as mine or different from mine?